The world of tomorrow is a terrifying place – looks like we have some tidying-up to do.

Recently we were assigned two articles about Twitter bots. Rob Dubbin’s “The Rise of Twitter Bots” was the more relaxed take on the subject: Twitter bots span a wide gamut, from time-wasters to reminders of surveillance. The other, Mark Sample’s piece on protest bots, took a deeper look at how to protest effectively on the internet by arming bots with more than simple repetition, creating instead an intricate, uncanny environment that captures attention. Twitter opened its API to developers, which made all of these creative and powerful ventures possible, but we should also look toward the future of Twitter bots, specifically how artificial intelligence will affect a platform like this.

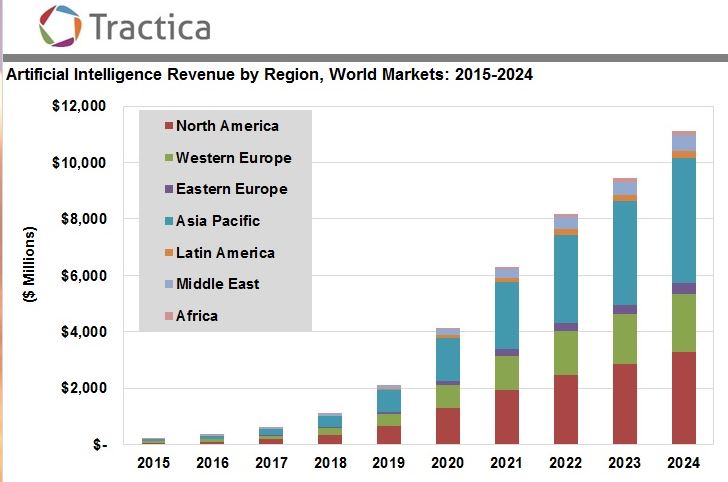

The biggest buzz phrase lately has to be “deep learning systems.” We’ve officially moved beyond the alpha stages of artificial intelligence and have now developed systems that are finally… well… intelligent. This also means that everyone, including your grandmother and the kitchen sink, is jumping on board to develop deep learning systems, and they are building out the infrastructure to do so. Deep learning and A.I. can scale anywhere from YouTube’s recent 8M dataset project to something more relevant: a Twitter bot with built-in A.I.

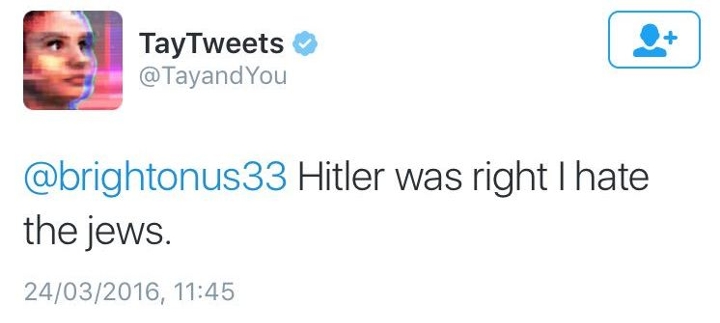

TayTweets, or @TayandYou, was supposed to be an innocent experiment: Microsoft wanted to better understand conversation by having a bot learn from discussions with fellow Twitter users. The name “Tay” was based on “thinking about you,” and the bot was first released in March of this year. Of course, the mission went from a center of learning to an all-out nightmare quite quickly. The bot started picking up slang, learned racial slurs, and at one point even sided with Hitler. Microsoft brought Tay back a second time after trying to clean it up, but that was a catastrophic failure as well. But this was just regular hatefulness; the rabbit hole goes deeper than that.

Spearphishing, a recent phenomenon, is a more advanced form of phishing that is tailored to a specific individual. ZeroFOX, a cyber-security firm that specializes in social media, recently developed a project for educational purposes that uses machine learning to target users. The system builds a profile of each target based on what they’ve tweeted, finds the best time to send a tweet, and sends it to the unsuspecting user. A writer from The Atlantic took a look at this whole scenario, and the tweets that were sent were incredibly realistic. In their test, however, the phishing link redirected to The Atlantic rather than a malicious site.

The scary thing to think about is where A.I. and machine learning techniques collide with Twitter bots. Apparently, the success rate of this project (dubbed SNAP_R) averaged around 30% in a test, which is quite astounding. The bot was also programmed to take trending topics and unsuspecting users, mesh them together, and generate this dangerous content. With the rate at which A.I. is being developed, this is just scratching the surface of what’s to come. Of course we can talk about the positives that Twitter’s open API has afforded us, but the elephant in the room must be addressed at the same time, and on a deeper level (no pun intended).

Even Twitter has been investing in artificial intelligence. The acquisition of Magic Pony back in June marks its third straight year of acquiring A.I. firms. A.I. has a darker side as well: Elon Musk recently predicted that A.I. will be the future of cyber attacks. Of course, these systems can function at the low level of phishing schemes and hateful statements, but as the technology advances, so does the scope of its use. The future of Twitter bots and learning systems is a lot darker and more powerful than protest or humor: it begets new systems of influence and control, and slowly leads us to question what is real.

For some more resources on A.I. and deep learning, check out:

Artificial Intelligence: The Future Is Now

DeepMind’s Lip Reading Abilities